1. Introduction: The Era of Collaborative Intelligence

For years, the industry focused on making Large Language Models (LLMs) smarter. In 2026, the focus has shifted from the intelligence of the model to the intelligence of the system. Traditional AI implementations function as 'Lone Wolves': single agents trying to handle research, analysis, and execution simultaneously. Packing every responsibility into one prompt and one context window often leads to diluted instructions and more hallucinations.

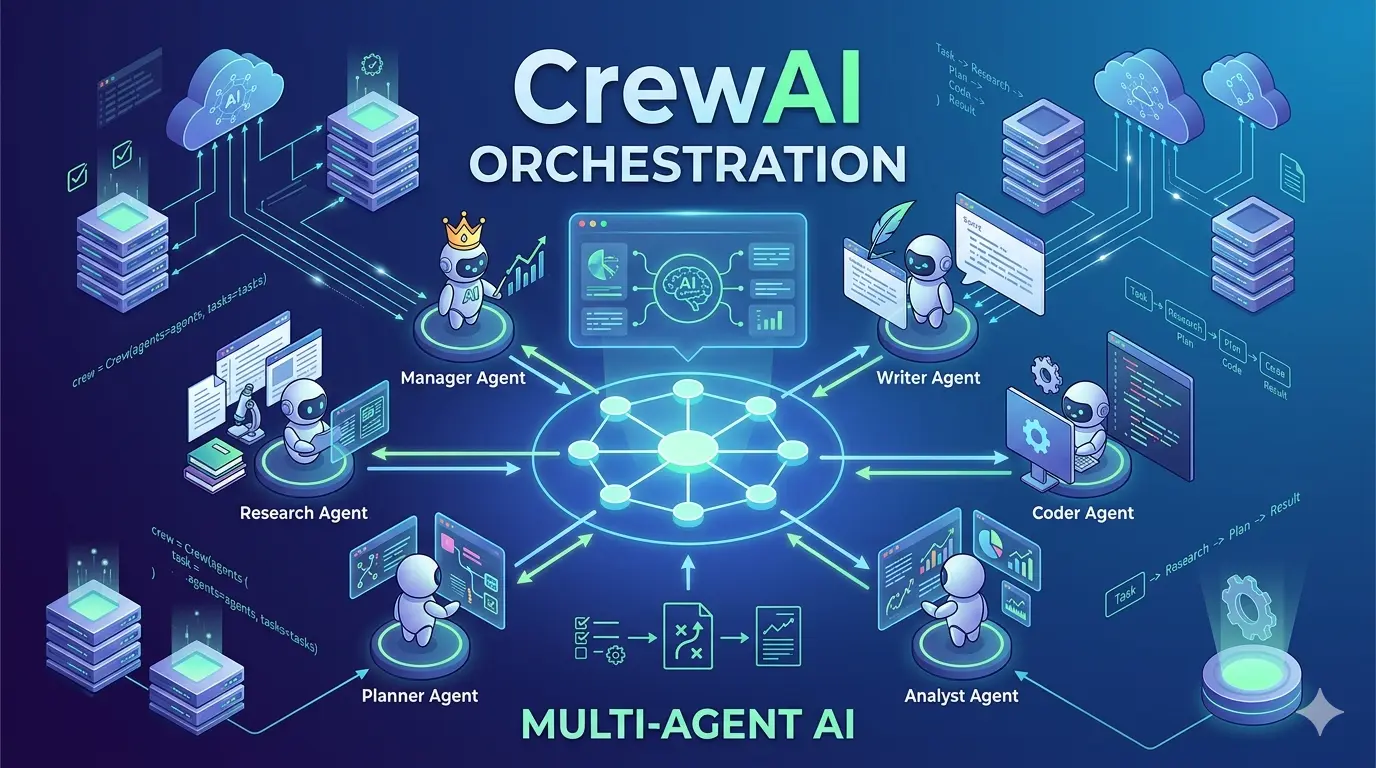

CrewAI disrupts this paradigm by introducing Multi-Agent Orchestration. By breaking complex objectives into specialized roles, CrewAI allows developers to build systems where agents collaborate, challenge each other, and delegate tasks. This guide explores how to move from simple automation to 'Agentic Workflows' that mirror the dynamics of a high-performing human team.

2. What is CrewAI? (Defining the Agentic Stack)

CrewAI is an open-source framework designed to orchestrate role-playing, autonomous AI agents. Unlike simple chatbots, CrewAI agents are 'Agentic'—they don't just talk; they act.

At its core, CrewAI allows you to define a Backstory, a Goal, and a Set of Tools for each agent. This role-based approach ensures that a 'Senior Research Analyst' agent focuses on data integrity, while a 'Technical Writer' agent focuses on clarity and narrative flow. By separating concerns, you achieve a level of precision that single-agent systems struggle to match. It is built on Python, making it a natural fit for the modern AI stack alongside LangChain and AutoGen.

3. The Architecture of a Multi-Agent System

The CrewAI architecture is a sophisticated hierarchy designed to manage complexity without sacrificing autonomy. It operates through four distinct layers:

- The Logic Layer (Flows): This is the 'CEO' of the operation. Flows manage the state, conditional logic (if/then branching), and the sequence of events. They ensure the workflow remains predictable.

- The Orchestration Layer (Crews): This is where agents are grouped. A Crew manages how information is shared and how tasks are handed off between agents.

- The Execution Layer (Agents): The individual 'workers.' Each agent is powered by an LLM (for example GPT-4o, Claude 3.5 Sonnet, or a local Llama 3 model) and possesses its own 'Short-Term Memory' and 'Long-Term Memory'.

- The Capability Layer (Tools): External functions that agents can invoke. Whether it's a Google Search, a database query, or a custom API call, tools allow agents to interact with the real world.
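The way these four layers nest can be made concrete with a few plain dataclasses. This is a stdlib-only sketch of the layering idea, not CrewAI's API: the class names `Tool`, `Agent`, `Crew`, the `flow` function, and the `word_count` tool are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Tool:
    """Capability layer: a named function an agent may call."""
    name: str
    run: Callable[[str], str]


@dataclass
class Agent:
    """Execution layer: a worker with a role and its own tools."""
    role: str
    tools: dict[str, Tool] = field(default_factory=dict)

    def use(self, tool_name: str, arg: str) -> str:
        if tool_name not in self.tools:
            raise KeyError(f"{self.role} has no tool named {tool_name!r}")
        return self.tools[tool_name].run(arg)


@dataclass
class Crew:
    """Orchestration layer: groups agents and looks them up by role."""
    agents: list[Agent]

    def agent(self, role: str) -> Agent:
        return next(a for a in self.agents if a.role == role)


def flow(crew: Crew, text: str) -> dict:
    """Logic layer: holds state and branches on it."""
    state = {"input": text}
    if text.strip():  # conditional branch: only call the agent when there is input
        state["words"] = crew.agent("Analyst").use("word_count", text)
    else:
        state["words"] = "0"
    return state


counter = Tool("word_count", lambda s: str(len(s.split())))
crew = Crew([Agent("Analyst", {"word_count": counter})])
result = flow(crew, "agents call tools")
```

Each layer only talks to the one directly below it, which is what keeps the workflow predictable as more agents and tools are added.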

4. Core Concepts: The Pillars of CrewAI

4.1 Agents: The Cognitive Workers

In CrewAI, an agent is more than a prompt. It is a configuration of Role, Goal, and Backstory. The Backstory is particularly critical; it provides the context that shapes how the agent approaches its decisions. For example, telling an agent it is a 'Skeptical Auditor' tends to produce stricter data validation than a generic 'Assistant' prompt.
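To see why the three fields matter, it helps to look at how they might be combined into the instructions an LLM receives. The sketch below is plain Python and only illustrates the idea; the `AgentSpec` class and its prompt wording are assumptions, not how CrewAI assembles its prompts internally.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    role: str
    goal: str
    backstory: str

    def system_prompt(self) -> str:
        # The backstory sets the stance; the goal states what "done" means.
        return (
            f"You are a {self.role}. {self.backstory}\n"
            f"Your goal: {self.goal}"
        )


auditor = AgentSpec(
    role="Skeptical Auditor",
    goal="Flag any figure that lacks a cited source.",
    backstory="You have seen many reports fail review over unverified numbers.",
)
prompt = auditor.system_prompt()
```

Swapping only the `role` and `backstory` values changes the stance the model takes toward the same input, without touching the task itself.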

4.2 Crews: Collaborative Structures

A Crew defines the Process Strategy. You can choose between Sequential (Task A -> Agent 1, then Task B -> Agent 2) or Hierarchical (A Manager Agent oversees the team and decides who does what). The Hierarchical process is a game-changer for complex projects, as the 'Manager' agent acts as a quality control filter.
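The difference between the two strategies can be sketched in a few lines of plain Python. CrewAI exposes them as `Process.sequential` and `Process.hierarchical`; everything else here (the `run_sequential` and `run_hierarchical` helpers, the toy `research` and `write` workers, and the keyword-based manager) is a hypothetical stand-in for LLM-backed agents.

```python
from typing import Callable

Worker = Callable[[str], str]


def run_sequential(steps: list[Worker], brief: str) -> str:
    """Each step receives the previous step's output."""
    output = brief
    for step in steps:
        output = step(output)
    return output


def run_hierarchical(
    manager: Callable[[str], str],
    team: dict[str, Worker],
    tasks: list[str],
) -> list[str]:
    """The manager names a role for each task; that worker handles it."""
    return [team[manager(task)](task) for task in tasks]


def research(text: str) -> str:
    return f"notes({text})"


def write(text: str) -> str:
    return f"draft({text})"


def manager(task: str) -> str:
    # Toy routing rule standing in for a manager agent's judgment.
    return "researcher" if task.startswith("find") else "writer"


sequential_result = run_sequential([research, write], "topic")
hierarchical_result = run_hierarchical(
    manager,
    {"researcher": research, "writer": write},
    ["find sources", "summarize findings"],
)
```

In the sequential case the order is fixed when the crew is defined; in the hierarchical case the assignment is decided at run time, which is where the manager can also act as a quality gate.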

4.3 Flows: Stateful Orchestration

Introduced to handle long-running tasks, Flows allow you to maintain state across different sessions. This is essential for enterprise applications where a task might take 30 minutes to complete and requires several 'checkpoints' or human-in-the-loop approvals.
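The checkpoint idea can be illustrated without any framework: save the state after each step so that a later session resumes where the last one stopped. This is a minimal sketch assuming a JSON file as the store; the step names, the `run_flow` function, and the `stop_after` parameter (used only to simulate an interrupted session) are hypothetical and not part of CrewAI.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional

STEPS = ["research", "draft", "review"]


def run_flow(checkpoint: Path, stop_after: Optional[int] = None) -> dict:
    """Run the remaining steps, saving state to disk after each one."""
    if checkpoint.exists():
        state = json.loads(checkpoint.read_text())
    else:
        state = {"done": []}
    for step in STEPS:
        if step in state["done"]:
            continue  # already finished in an earlier session
        if stop_after is not None and len(state["done"]) >= stop_after:
            break  # simulate the session ending early
        state["done"].append(step)
        checkpoint.write_text(json.dumps(state))
    return state


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "flow_state.json"
    first = run_flow(path, stop_after=1)   # first session stops after one step
    second = run_flow(path)                # second session picks up the rest
```

A human-in-the-loop approval fits the same pattern: the flow stops at a checkpoint, and the next session starts only once the approval has been recorded in the saved state.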

4.4 Tasks and Tools: The 'What' and the 'How'

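Tasks are discrete units of work with a defined 'Expected Output.' Tools are the interfaces agents use to act on the outside world. CrewAI also supports delegation: an agent that is allowed to delegate can hand a sub-task to, or ask a question of, another agent it judges better suited for it. The work is delegated, not the tool itself; each agent keeps its own tool set.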
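Delegation can be sketched as a simple fallback: an agent handles a request with its own tools and, if it cannot and is permitted to delegate, hands the request to a coworker who can. The `allow_delegation` name mirrors the CrewAI agent setting, but the `Worker` class and its `handle` method are a hypothetical plain-Python model, not the library's implementation.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Worker:
    role: str
    tools: dict[str, Callable[[str], str]] = field(default_factory=dict)
    allow_delegation: bool = False

    def handle(self, tool_name: str, arg: str, coworkers: list["Worker"]) -> str:
        if tool_name in self.tools:
            return f"{self.role}: {self.tools[tool_name](arg)}"
        if self.allow_delegation:
            for other in coworkers:
                if tool_name in other.tools:
                    # Hand the sub-task over; the tool stays with its owner.
                    return other.handle(tool_name, arg, [])
        raise LookupError(f"no agent can run {tool_name!r}")


writer = Worker("Writer", allow_delegation=True)
researcher = Worker("Researcher", tools={"search": lambda q: f"results for {q}"})
answer = writer.handle("search", "CrewAI", [researcher])
```

Passing an empty coworker list on the delegated call keeps the hand-off to a single hop, which is one simple way to avoid agents bouncing a task back and forth.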

5. How CrewAI Works: The Lifecycle of a Task

Execution in CrewAI follows a structured lifecycle:

- Decomposition: The Flow or Manager Agent breaks a high-level Goal into smaller, actionable Tasks.

- Assignment: Tasks are assigned based on the 'Role' and 'Tools' of the available Agents.

- Execution & Tooling: Agents execute their tasks. If an agent hits a roadblock, it can 'Collaborate' by asking another agent for input or clarification.

- Validation: The output is checked against the 'Expected Output' definition, for example by a task guardrail or a structured-output schema. If the check fails, the task is sent back for a 'Retry'.

- Synthesis: The Crew aggregates all task outputs into a final, structured response (often in Markdown or JSON).
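The validation and retry steps of this lifecycle amount to a bounded loop. The sketch below shows that loop in plain Python under the assumption that "valid" means "parses as a JSON object"; `run_with_retry`, `fake_agent`, and `is_json_object` are hypothetical names, and `fake_agent` stands in for an LLM call.

```python
import json
from typing import Callable


def run_with_retry(
    produce: Callable[[int], str],
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,
) -> tuple[str, int]:
    """Call produce(attempt) until is_valid accepts the output."""
    for attempt in range(1, max_attempts + 1):
        output = produce(attempt)
        if is_valid(output):
            return output, attempt
    raise RuntimeError(f"no valid output after {max_attempts} attempts")


def fake_agent(attempt: int) -> str:
    # Stand-in for an agent that only formats its answer correctly on the second try.
    return '{"summary": "ok"}' if attempt >= 2 else "summary: ok"


def is_json_object(text: str) -> bool:
    try:
        return isinstance(json.loads(text), dict)
    except json.JSONDecodeError:
        return False


output, attempts = run_with_retry(fake_agent, is_json_object)
```

Capping the number of attempts matters in practice, since every retry is another model call and adds to both latency and token cost.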

6. Real-World Applications (2026 Industry Use Cases)

- Automated Software Engineering: A 'Lead Architect' agent creates a system design, a 'Developer' agent writes the code, and a 'QA Engineer' agent writes and runs the tests. This reduces bugs in initial builds by up to 40%.

- Investment Research: One agent scrapes financial news, another parses SEC filings, and a third 'Risk Analyst' agent synthesizes the data into a 'Buy/Sell' recommendation.

- Content Marketing Pipelines: From SEO keyword research to drafting, image generation prompts, and social media distribution—all handled by a specialized crew working in parallel.

- Customer Support Escalation: Using a Flow to determine if a query can be handled by an 'FAQ Agent' or needs to be escalated to a 'Technical Support Agent' with access to internal database tools.
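The customer support case is the easiest of these to sketch, because the Flow's job reduces to a routing decision. The example below is plain Python with made-up FAQ entries; the `route` function and the agent names it returns are hypothetical, and a real Flow would likely use an LLM classifier rather than substring matching.

```python
# Illustrative FAQ content only; not taken from any real product.
FAQ = {
    "reset password": "Use the 'Forgot password' link on the sign-in page.",
    "billing cycle": "Invoices are issued on the first day of each month.",
}


def route(query: str) -> tuple[str, str]:
    """Return (agent_name, response) for a support query."""
    lowered = query.lower()
    for topic, answer in FAQ.items():
        if topic in lowered:
            return "faq_agent", answer
    # Nothing matched: hand over to the agent with database tools.
    return "technical_support_agent", "Escalated with the original query attached."


handled = route("How do I reset password on mobile?")
escalated = route("The export API returns a 500 error")
```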

7. Advantages and Challenges

The Advantages:

- Scalability: It’s easier to add a 'Legal Agent' to a crew than it is to rewrite a 5,000-word prompt to include legal knowledge.

- Reliability: Having one agent check another's output catches errors that a single model, asked to correct itself, often misses.

- Modularity: You can swap out the LLM for individual agents. Use a cheaper model such as GPT-3.5 Turbo for simple tasks and a more capable one such as Claude 3 Opus or Claude 3.5 Sonnet for the 'Manager' role.

The Challenges:

- Token Consumption: Running five agents generally consumes more tokens, and therefore costs more, than running one. Optimization is key.

- Latency: Multi-step reasoning takes more time. For real-time chat, sequential crews can feel slow.

- Complexity: Managing state and debugging 'Agent Loops' (where agents keep delegating to each other) requires significant Python expertise.
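An 'Agent Loop' is easier to debug once the delegation chain is written down explicitly. The sketch below assumes each agent delegates to at most one other agent and checks whether following that chain ever revisits an agent; `find_delegation_loop` and the role names are hypothetical, and this is a diagnostic idea rather than a CrewAI feature.

```python
from typing import Optional


def find_delegation_loop(
    start: str, delegates_to: dict[str, str]
) -> Optional[list[str]]:
    """Return the looping path if delegation revisits an agent, else None."""
    path = [start]
    while path[-1] in delegates_to:
        target = delegates_to[path[-1]]
        if target in path:
            return path + [target]
        path.append(target)
    return None


loop = find_delegation_loop(
    "writer", {"writer": "researcher", "researcher": "writer"}
)
no_loop = find_delegation_loop("writer", {"writer": "researcher"})
```

Logging the returned path alongside a hard cap on delegation depth usually makes this class of bug visible quickly.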

8. CrewAI vs. The Competition

While Microsoft AutoGen is excellent for conversational AI and LangGraph offers granular control over graph cycles, CrewAI's main strength is developer experience (DX). It provides a more intuitive, 'Human-First' abstraction. It feels like managing a team, not writing a complex state machine. For startups and enterprises looking for a balance between power and ease of use, CrewAI is a leading choice.

9. Frequently Asked Questions

Q: Can I run CrewAI locally?

Yes. By using tools like Ollama, you can point your CrewAI agents to local models (Llama 3, Mistral), ensuring complete data privacy and zero API costs.

Q: How do I handle human intervention?

CrewAI supports 'Human-in-the-loop.' You can set a task to human_input=True, which pauses the agent and waits for your approval or feedback before proceeding.
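The pause-and-approve behaviour can be modelled as a gate between producing a draft and accepting it. This is a plain-Python sketch of the pattern, not CrewAI's implementation of `human_input=True`; the `review_gate` function and its `ask` parameter are hypothetical, with `ask` injectable so the gate can be exercised without a real terminal prompt.

```python
from typing import Callable


def review_gate(draft: str, ask: Callable[[str], str] = input) -> str:
    """Pause for a reviewer; an empty reply approves, anything else is feedback."""
    reply = ask(f"Draft:\n{draft}\nPress Enter to approve or type feedback: ").strip()
    if not reply:
        return draft
    # In a real system the feedback would go back to the agent for another pass.
    return f"{draft}\n[revise: {reply}]"


approved = review_gate("Q3 summary", ask=lambda _prompt: "")
revised = review_gate("Q3 summary", ask=lambda _prompt: "add sources")
```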

Q: Is it ready for production?

Absolutely. With the introduction of CrewAI Enterprise, features like telemetry, monitoring, and agent-level security guardrails have made it the go-to for production-grade agentic systems.

10. Conclusion

CrewAI isn't just a framework; it's a blueprint for the future of work. As AI becomes more integrated into our professional lives, the ability to build 'Digital Workforces' that can think, act, and collaborate will be the ultimate competitive advantage. Whether you are building a simple research tool or a complex automated enterprise, the principles of agentic orchestration will be at the heart of your success. Start small, define your roles clearly, and watch your crews transform your productivity.